After I published the blog posts about the sample IT maps a few weeks back, questions started to come in about how those maps could be created for ICS/SCADA deployments.

Monthly Archives: January 2012

MicroSolved, Inc. Releases Free Tool To Expose Phishing

MSI’s new tool helps organizations run their own phishing tests from the inside.

We’re excited to release a new, free tool that provides a simple, safe and effective mechanism for security teams and administrators to run their own phishing tests inside their organization. They simply install the application on a server or workstation and create a campaign (via email, SMS, etc.) with a URL that entices users to visit the site. They can encode the URLs, mask them, or shorten them to obfuscate their structure if they like.

The application is a fully self-contained web mechanism, so no additional applications are required. There is no need to install and configure IIS, Apache and a database to manage the logs. All of the tools needed are built into the simple executable, which is capable of being run on virtually any Microsoft Windows workstation or server.

If a user visits the tool’s site, their session will create a log entry as a “bite”, with their IP address in the log. Visitors who actually input a login and password will get written to the log as “caught”, including their IP address, the login name and the first 3 characters of the password they used.

Only the first 3 characters of the password are logged. This is enough to be useful in discussions with users and to show that they entered their credentials, but not enough to be useful in further attacks. The purpose of this tool is to test, assess and educate users, not to commit fraud or gather real phishing data. For this reason, and for the risks it would present to the organization, full password capture is not available in the tool and is not logged.
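The logging behavior described above can be sketched in a few lines. This is only an illustration of the idea, not the tool's actual code: the function name `make_log_entry` and the field names are hypothetical, and only the "bite"/"caught" labels and the 3-character limit come from the description above.

```python
from datetime import datetime, timezone

PASSWORD_PREFIX_LEN = 3  # the post states only the first 3 characters are kept


def make_log_entry(ip, login=None, password=None):
    """Build a log record: "bite" for a visit, "caught" for a submitted login.

    Hypothetical helper for illustration; field names are assumptions.
    """
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "ip": ip,
        "event": "bite",
    }
    if login is not None and password is not None:
        entry["event"] = "caught"
        entry["login"] = login
        # Truncate before anything is stored, so the full password
        # never reaches the log.
        entry["password_prefix"] = password[:PASSWORD_PREFIX_LEN]
    return entry
```

The key design choice is that truncation happens before the record is built, so there is no code path in which the complete password is written anywhere.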

“Organizations can now easily, quickly and safely run their own ongoing phishing campaigns. Instead of worrying about the safety of gathering passwords or the budget impacts of hiring a vendor to do it for them, they can simply ‘click and phish’ their way to higher security awareness,” said Brent Huston, CEO & Security Evangelist of MicroSolved. “After all, give someone a phish and they’re secure for a day, but teach someone to phish and they might be secure for a lifetime…” Mr. Huston laughed.

The tool can be downloaded by visiting this link or by visiting MSI’s website.

Focus On Input Validation

Input validation is the single best defense against injection and XSS vulnerabilities. Done right, proper input validation techniques can make web applications invulnerable to such attacks. Done poorly, they offer little more than a false sense of security.

The bad news is that input validation is difficult. Whitelisting, or identifying all possible strings accepted as input, is nearly impossible for all but the simplest of applications. Blacklisting, that is, parsing the input for bad characters (such as ‘, ;, –, etc.) and dangerous strings, can be challenging as well. Though this is the most common method, it is frequently challenged, as attackers work through various encoding mechanisms, translations and other avoidance tricks to bypass such filters.
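To make the contrast concrete, here is a minimal Python sketch comparing the two approaches on a single simple field. The username rule, the token list and both function names are assumptions chosen for illustration; real applications need validation rules for every input, and that is where the difficulty lies.

```python
import re

# Hypothetical whitelist rule: 3-20 letters, digits or underscores.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,20}")

# Hypothetical blacklist of "bad" tokens, similar to the characters above.
BAD_TOKENS = ("'", ";", "--")


def is_valid_username(value):
    """Whitelist check: accept only input that matches the known-good pattern."""
    return isinstance(value, str) and USERNAME_RE.fullmatch(value) is not None


def passes_blacklist(value):
    """Blacklist check: reject input only if it contains a known-bad token."""
    return not any(token in value for token in BAD_TOKENS)


# A URL-encoded payload contains none of the blacklisted tokens,
# so it slips past the blacklist while the whitelist rejects it.
encoded = "%27%20OR%201%3D1"
print(passes_blacklist(encoded), is_valid_username(encoded))
```

If the application later URL-decodes that value, the blacklist has already been bypassed, which is the kind of encoding trick described above.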

Over the last few years, a single source has emerged for best practices around input validation and other web security issues. The working group OWASP has some great techniques for various languages and server environments. Further, vendors such as Sun, Microsoft and others have created best practice articles and sample code for doing input validation for their servers and products.

Check with your vendor’s knowledge base or support team for specific information about their platform and the security controls they recommend. While application frameworks and web application firewalls are evolving as tools to help with these security problems, proper developer education and ongoing training of your development team about input validation remain the best solution.

MSI Strategy & Tactics Talk Ep. 21: The Penetration Testing Execution Standard

Penetration tests have been performed for years, yet there has often been confusion about what a penetration test should deliver. Enter the beta release of the Penetration Testing Execution Standard (PTES). What does it mean? In this episode of MSI Strategy & Tactics, the techs discuss the current state of penetration tests and how PTES is a good idea that will benefit many organizations. Take a listen! Discussion questions include:

- What is PTES? How does it differ from the current state of the industry?

- What is the importance of industry standardization? Is it a good thing or a bad thing?

- What does it mean for the future of vulnerability and penetration testing?

Click the embedded player to listen. Or click this link to access downloads. Stay safe!

PIPA/SOPA/Etc. Will Speed Up the Crime Stream

Today, many sites are protesting PIPA/SOPA and the like. You can read Google or Wikipedia for why those organizations and thousands of others are against the approach of these laws. But, this post ISN’T about that. In fact, censorship aside, I am personally and professionally against these laws for an entirely different reason altogether.

My reason is this: they will simply speed up the crime stream. They will NOT shut down pirate sites or illicit trading of stolen data. They will simply force pirates, thieves and data traders to embrace more dynamic architectures and mechanisms for their crimes. Instead of using web sites, they will revert to IRC, bot-net peering, underground message boards and a myriad of other ways that data moves around the planet. They will move here, laws will pass to block that, they will move there, lather, rinse and repeat…

In the meantime, piracy, data theft, data trading and online crimes will continue to grow unabated, as they will without PIPA/SOPA/Etc. Nary a dent will be made in the amount or impact of these crimes. Criminals already have the technology and incentives to create more dynamic, adaptable and capable tools to defy the law than we have to marshal against them in enforcing the law.

After all that, what are we left with? A faster, more agile set of criminals who will actively endeavor to shorten the value chain of data, including intellectual property like movies, music and code. They will strive to be even faster to copy and spread their stolen information, creating even more technology that will need to be responded to with the “ban hammer”. The cycles will just continue, deepen and quicken, eventually stifling legitimate innovation and technology.

Saddest of all, once we determine that these laws were ineffective against the crime they sought to curtail, we will still have suffered a loss of speech during that time, even if the laws are eventually repealed. That’s right, censorship has a lasting effect, and we might lose powerful ideas, ideals and potentially world changing innovations during the time when people feel they are being censored. We lose all of that, even without a single long term gain against crime.

Given the impacts I foresee from these laws, I cannot support them. I do believe in free speech. I do believe in free commerce on the Internet as a global enabler. But all of those reasons aside, I SIMPLY DO NOT BELIEVE that these laws will in any way affect the long term criminal viability or capability of pirates, thieves and data traders. Law is simply not capable of keeping pace with their level of innovation, adaptation and incentives. I don’t know what the answer is; I just know that this approach is not likely to be it.

So, that said, feel free to comment below on your thoughts on the impacts of these laws. If you are against the enactment of these laws, please contact your representatives in Congress and make your voice known. As always, thanks for reading and stay safe out there!

These are my opinions, as an individual – Brent Huston, and as an expert on information security and cyber-crime. They do not represent the views of any party, group or organization other than myself.

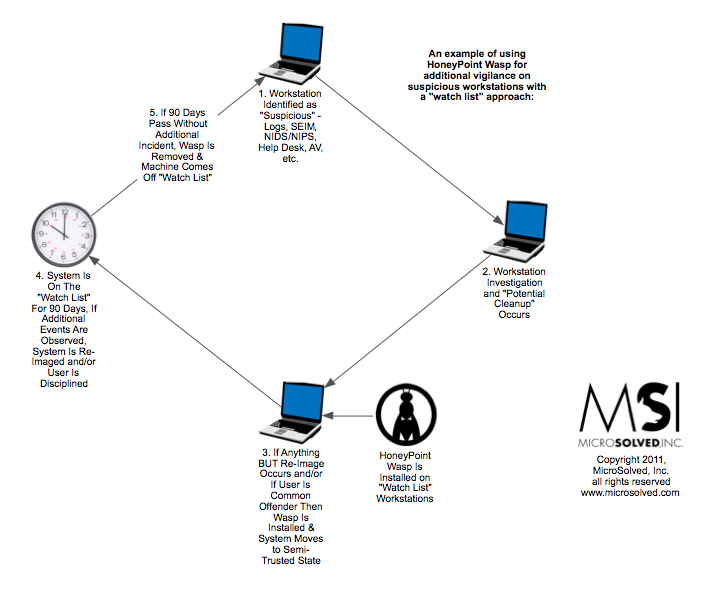

Quick Use Case for HoneyPoint Wasp

Several organizations have begun to deploy HoneyPoint Wasp as a support tool for malware “cleanup” and as a component of monitoring specific workstations and servers for suspicious activity. In many cases, where the help desk prefers “cleanup” to turn and burn/re-image approaches, this may help reduce risk and overall threat exposures by reducing the impact of compromised machines flowing back into normal use.

Here is a quick diagram that explains how the process is being used. (Click here for the PDF.)

If you would like to discuss this approach in more detail, feel free to give us a call to arrange a one on one session with an engineer. There are many ways that organizations are leveraging HoneyPoint technology as a platform for nuance detection. Most of them increase the effectiveness of the information security program and even reduce the resources needed to manage infosec across the enterprise!

Snort and SCADA Protocol Checks

Recently, ISC Diary posted this story about Snort 2.9.2 now supporting SCADA protocol checks. Why is this good news for SCADA?

Because it is a lower cost source of visibility for SCADA operators. Snort is free and a very competitive solution. There are more expensive commercial products out there, but they are more difficult to manage and have less of a public knowledge base and tools/options than Snort. Many security folks are already familiar with Snort, which should lower both the purchase and operational cost of this level of monitoring.

Those who know how to use Snort can now contribute directly to more effective SCADA monitoring. Basically, people with Snort skills are more prevalent, so it becomes less expensive to support the product, customize it to a specific environment and manage it over time. There is also a wide variety of open source add-ons and tools that can be leveraged around Snort, making it a reasonably priced yet powerful approach to visibility. Having people in the industry who know how the systems work and who know how Snort works allows for better development of signatures for various nefarious issues.

It is likely to be a good detection point for SCADA-focused malware and manual probes. The new signatures are written to look for common attacks that have already been publicly documented, so the tool should be able to identify them with ease. These attack patterns are not tied to currently trending malware; they have been known for some time.

The specifics of the probes are quite technical and we would refer readers to the actual Snort signatures for analysis if they desire.

By learning the signatures of various threats to the industry, people in the field can translate them into Snort rules that detect those signatures on the network and alert the proper parties in a timely manner. Snort has the flexibility (in the hands of someone who knows how to use it) to be molded to fit the needs of nearly any network.
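For readers who want a feel for what a protocol-level check involves, here is a rough Python sketch. It is not Snort code and not one of the Snort signatures. It assumes Modbus/TCP as the example protocol, and the function name, the list of allowed sources and the alert text are all hypothetical. The header layout and the write function codes follow the public Modbus specification.

```python
import struct

# Modbus function codes that write to coils or registers.
WRITE_FUNCTION_CODES = {5, 6, 15, 16}


def inspect_modbus_tcp(payload, allowed_sources, src_ip):
    """Return an alert string for a suspicious Modbus/TCP frame, else None.

    Hypothetical check: flag write requests from hosts not on an allow list.
    """
    if len(payload) < 8:
        return None  # too short to hold the MBAP header plus a function code
    # MBAP header: transaction id, protocol id, length (big-endian 16-bit
    # each), then a 1-byte unit id, followed by the 1-byte function code.
    _tid, proto_id, _length, _unit, func = struct.unpack(">HHHBB", payload[:8])
    if proto_id != 0:
        return None  # protocol id 0 identifies Modbus
    if func in WRITE_FUNCTION_CODES and src_ip not in allowed_sources:
        return f"Modbus write (function {func}) from unexpected host {src_ip}"
    return None
```

A write request arriving from a workstation that normally only reads values is the kind of event such a check is meant to surface. A real deployment would rely on the actual Snort signatures rather than a hand-rolled parser like this one.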

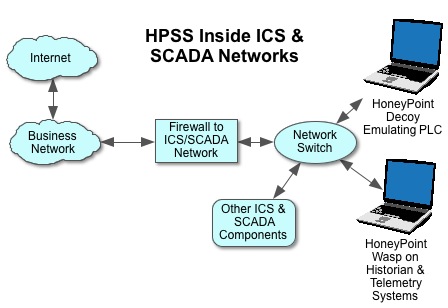

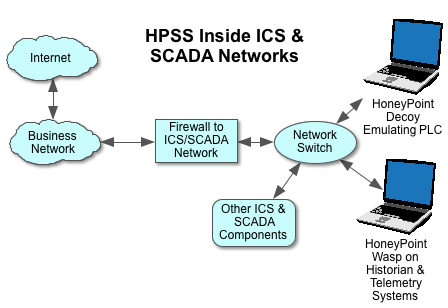

It makes an excellent companion tool to a deployment of HoneyPoint deep inside SCADA and ICS networks. In this case, Snort is usually deployed on the internal network segment of the ICS/SCADA firewall, plugged into the network switch. HPSS is as shown.

If you’re looking for a low-cost solution and plenty of functionality for your SCADA, this recent development is a welcome one!

What the Heck Is FeeLCoMz?

Quick Use Case for HoneyPoint in ICS/SCADA Locations

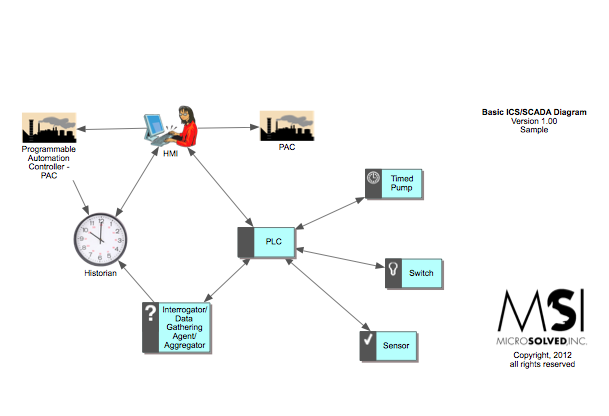

This quick diagram shows a couple of ways that many organizations use HoneyPoint as a nuance detection platform inside ICS/SCADA deployments today.

Basically, they use HoneyPoint Agent/Decoy to pick up scans and probes, configuring it to emulate an endpoint or PLC. This is particularly useful for picking up wider area scans, probes and malware propagations.

Additionally, many organizations are finding value in HoneyPoint Wasp, using its white list detection capabilities to identify new code running on HMIs, Historians or other Windows telemetry data systems. In many cases, Wasp can quickly and easily identify malware, worms or even unauthorized updates to the ICS/SCADA components.
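The general idea behind white list detection can be sketched as follows. This is a generic illustration of comparing running code against a known-good baseline and does not describe how Wasp itself is implemented. The function names and the use of SHA-256 hashes are assumptions.

```python
import hashlib


def sha256_of(data):
    """Return the hex SHA-256 digest of a program's contents (bytes)."""
    return hashlib.sha256(data).hexdigest()


def find_unknown(running, known_hashes):
    """Return names of running programs whose hash is not in the baseline.

    running: mapping of program name -> file contents (bytes)
    known_hashes: set of hex digests recorded when the host was known good
    """
    return sorted(
        name for name, data in running.items()
        if sha256_of(data) not in known_hashes
    )


# Baseline taken on a known-good HMI, then compared against a later snapshot.
baseline = {sha256_of(b"hmi-v1")}
snapshot = {"hmi.exe": b"hmi-v1", "dropper.exe": b"unexpected"}
print(find_unknown(snapshot, baseline))
```

Because the comparison is against what is known to be good, a changed binary is flagged the same way as a brand new one, which is how an unauthorized update would surface alongside malware.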

The Smart Grid Raises the Bar for Disaster Recovery

As we present at multiple smart grid and utility organizations, many folks seem to be focusing on the confidentiality, integrity, privacy and fraud components of smart grid systems.

Our lab is busily working with a variety of providers, component vendors and other folks doing security assessments, code review and penetration testing against a wide range of systems from the customer premises to the utility back office and everything in between. However, we consistently see many organizations underestimating the costs and impacts of disaster recovery, business continuity and other efforts involved in responding to issues when the smart grid is in play.

For example, when asked about smart meter components recently, one of our water concerns had completely ignored the susceptibility of these computer devices to water damage in a flood or high rain area. It seems simple, but even though the devices are used inside in-ground holes in neighborhoods, the idea of what happens when they are exposed to water had never been discussed. The vendor made a claim that the devices were “water resistant”, but that is much different than “waterproof”. Filling a tub with water and submerging a device quickly demonstrated that the casing allowed a large volume of water into the device and that when power was applied, the device simply shorted in what we can only describe as “an interesting display”.

The problem with this is simple. Sometimes areas where this technology is eventually intended to be deployed will experience floods. When that happens, the smart meter and other computational devices may have to be replaced en masse. If that happens, there is a large cost to be considered, there are issues with labor force availability/safety/training and there are certainly potential issues with vendor supply capabilities in the event of something large scale (like Hurricane Katrina in New Orleans).

Many of the organizations we have talked to simply have not begun the process of adjusting their risk assessments, disaster plans and the like for these types of operational requirements, even as smart grid devices begin to proliferate across the US and global infrastructures.

There are a number of other examples ranging from petty theft (computer components have aftermarket value & large scale theft of components is probable in many cases) to outright century events like hurricanes, floods, earthquakes and tornadoes. The bottom line is this: smart grid components introduce a whole new layer of complexity to utilities and the infrastructure. Now is the time for organizations considering or already using them to get their heads and business processes wrapped around them in today’s deployments and those likely to emerge in the tomorrows to come.