AI Agents Are Not Applications. They Are Digital Workers.

Most organizations are adopting AI agents faster than they are learning how to govern them.

That is the problem.

A chatbot that answers questions is one thing. An AI agent that can access business data, use tools, trigger workflows, generate artifacts, make recommendations, or alter enterprise state is something else entirely.

At that point, the organization is no longer just deploying software.

It is introducing a new kind of operational actor.

That actor needs identity.

It needs boundaries.

It needs oversight.

It needs evidence.

It needs a human owner.

It needs a kill switch.

In other words, AI agents must be managed more like digital workers than ordinary applications.

The Governance Gap Is Already Here

Across enterprises, mid-market firms, and small businesses, the same pattern is emerging:

- Business teams are experimenting with agent workflows.

- Security teams are trying to understand the new control surface.

- Legal and HR teams are still catching up.

- Executives want productivity gains without slowing the business down.

- Audit, compliance, and risk teams are asking for evidence that often does not exist.

The dangerous assumption is that existing software governance, SaaS controls, service accounts, and general “responsible AI” policies will be enough.

They usually will not be.

AI agents create new questions:

- Who or what is this agent in the enterprise?

- What systems can it touch?

- What decisions can it influence?

- What actions can it take without human approval?

- What evidence exists if something goes wrong?

- Who owns the agent’s behavior?

- How do we suspend, investigate, or retire it?

If leadership cannot answer those questions, the organization does not yet govern its agents.
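One way to make these questions operational is to treat each one as a required field in a per-agent record and flag whatever is still blank. The sketch below is illustrative only: the `AgentGovernanceAnswers` class, its field names, and the `unanswered_questions` helper are assumptions made for this example, not something defined in the e-book.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class AgentGovernanceAnswers:
    """Hypothetical record: one field per governance question above."""
    identity: Optional[str] = None              # Who or what is this agent?
    systems_in_scope: Optional[str] = None      # What systems can it touch?
    decisions_influenced: Optional[str] = None  # What decisions can it influence?
    unapproved_actions: Optional[str] = None    # What can it do without human approval?
    evidence_sources: Optional[str] = None      # What evidence exists if something goes wrong?
    behavior_owner: Optional[str] = None        # Who owns the agent's behavior?
    suspension_procedure: Optional[str] = None  # How is it suspended, investigated, or retired?


def unanswered_questions(answers: AgentGovernanceAnswers) -> List[str]:
    """Return the names of fields that are missing or blank."""
    return [
        f.name
        for f in fields(answers)
        if not (getattr(answers, f.name) or "").strip()
    ]


# Example values are invented for illustration.
draft = AgentGovernanceAnswers(
    identity="invoice-triage-agent",
    behavior_owner="Finance Ops lead",
)
print(unanswered_questions(draft))
```

An empty list would mean every question has at least a written answer; it says nothing about whether the answer is adequate, which still needs human review.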

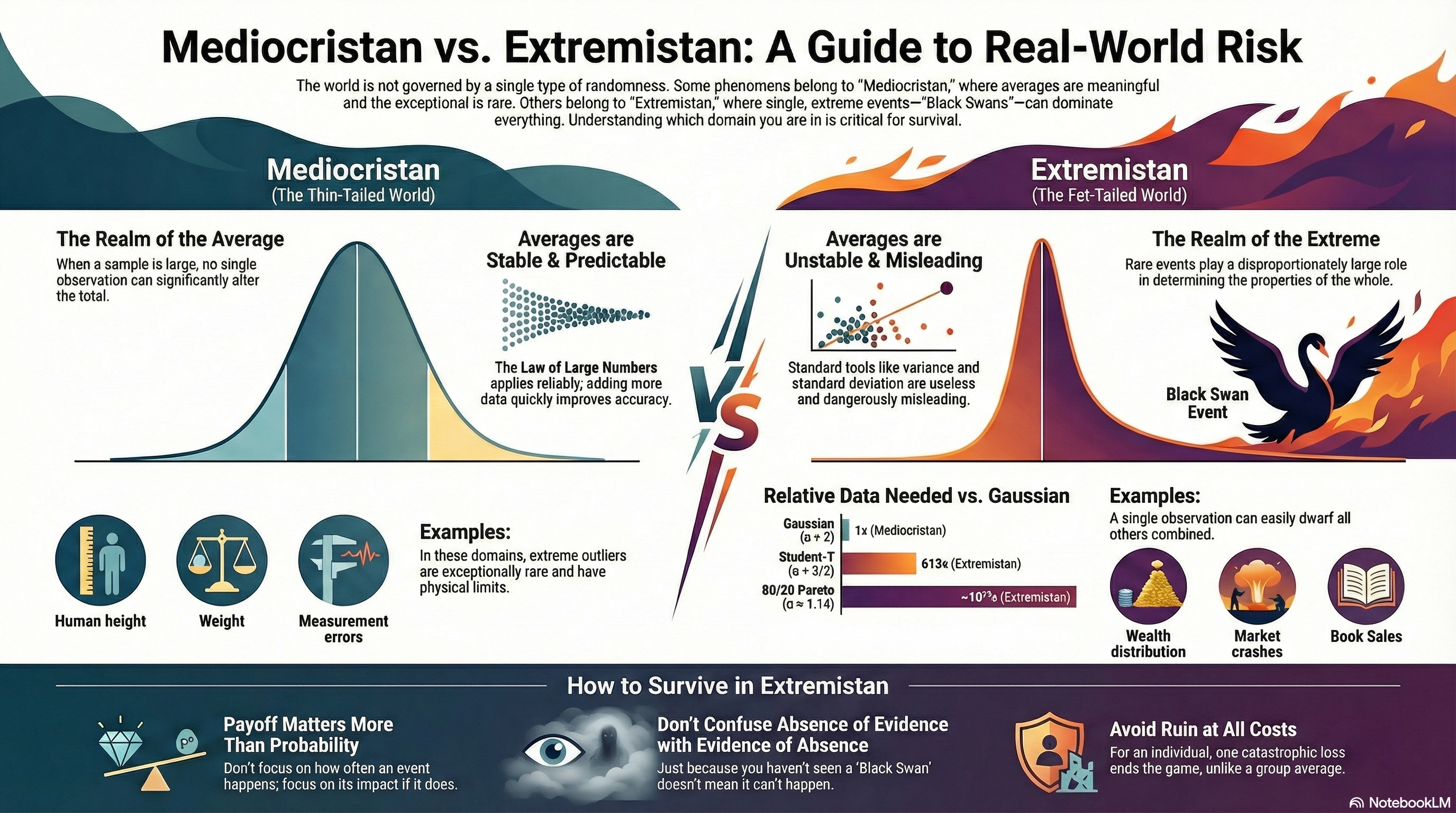

Why Traditional Software Governance Falls Short

Traditional software governance usually assumes that applications behave within relatively stable boundaries.

Someone writes the code.

Someone approves the deployment.

Someone grants access.

The system then performs the tasks it was designed to perform.

AI agents are different.

They interpret instructions. They infer next steps. They retrieve context. They call tools. They may chain actions together. They can create outputs that look polished and authoritative even when they are incomplete, wrong, or unsafe.

That changes the risk model.

The critical question is no longer simply:

“Can the system perform the task?”

The better question is:

“What happens when the agent performs the task incorrectly, partially, opaquely, or adversarially?”

That is where governance has to catch up.

The Six Planes of Agent Control

In the full e-book, I introduce a practical model called the six planes of agent control:

- Identity — Who is this agent in the enterprise?

- Policy — What is it allowed to do?

- Tool — What can it touch?

- Runtime — Where and how does it execute?

- Observability — What evidence exists about its behavior?

- Governance — Who approved it, owns it, reviews it, and can stop it?

This model gives executives, CISOs, boards, engineering teams, HR, legal, and GRC functions a shared language for managing agentic AI before uncontrolled adoption creates avoidable risk.

It also forces a hard but necessary shift:

Stop governing only the application.

Start governing the actor-like behavior.
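To make the six planes more concrete, here is a minimal sketch of how they might map onto a per-agent record and a single authorization check. Everything in it is an assumption for illustration: the `AgentRecord` fields, the `authorize` function, and the example values are hypothetical and are not taken from the e-book's templates.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class AgentRecord:
    """Hypothetical per-agent record, with fields grouped by plane."""
    agent_id: str                # Identity: who the agent is
    allowed_actions: Set[str]    # Policy: what it is allowed to do
    allowed_tools: Set[str]      # Tool: what it can touch
    runtime_environment: str     # Runtime: where it executes
    human_owner: str             # Governance: who owns it
    needs_approval: Set[str] = field(default_factory=set)  # Policy: actions needing sign-off
    suspended: bool = False      # Governance: kill switch
    audit_log: List[str] = field(default_factory=list)     # Observability: evidence


def authorize(agent: AgentRecord, action: str, tool: str,
              human_approved: bool = False) -> bool:
    """Decide whether an action may proceed and record the decision."""
    if agent.suspended:
        allowed, reason = False, "agent suspended"
    elif action not in agent.allowed_actions:
        allowed, reason = False, "action not in policy"
    elif tool not in agent.allowed_tools:
        allowed, reason = False, "tool not granted"
    elif action in agent.needs_approval and not human_approved:
        allowed, reason = False, "human approval required"
    else:
        allowed, reason = True, "within policy"
    verdict = "ALLOW" if allowed else "DENY"
    # Every decision, allowed or denied, leaves evidence behind.
    agent.audit_log.append(f"{agent.agent_id} {action} via {tool}: {verdict} ({reason})")
    return allowed


agent = AgentRecord(
    agent_id="invoice-triage-agent",
    allowed_actions={"read_invoice", "issue_refund"},
    allowed_tools={"erp_api"},
    runtime_environment="sandbox",
    human_owner="Finance Ops lead",
    needs_approval={"issue_refund"},
)
print(authorize(agent, "read_invoice", "erp_api"))
print(authorize(agent, "issue_refund", "erp_api"))
print(authorize(agent, "issue_refund", "erp_api", human_approved=True))
agent.suspended = True  # the owner pulls the kill switch
print(authorize(agent, "read_invoice", "erp_api"))
print("\n".join(agent.audit_log))
```

The design choice worth noting is that the check is made against the agent's record rather than the application's deployment: suspension is tested first, and denied requests are logged alongside allowed ones so that evidence exists either way.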

Why This Matters Now

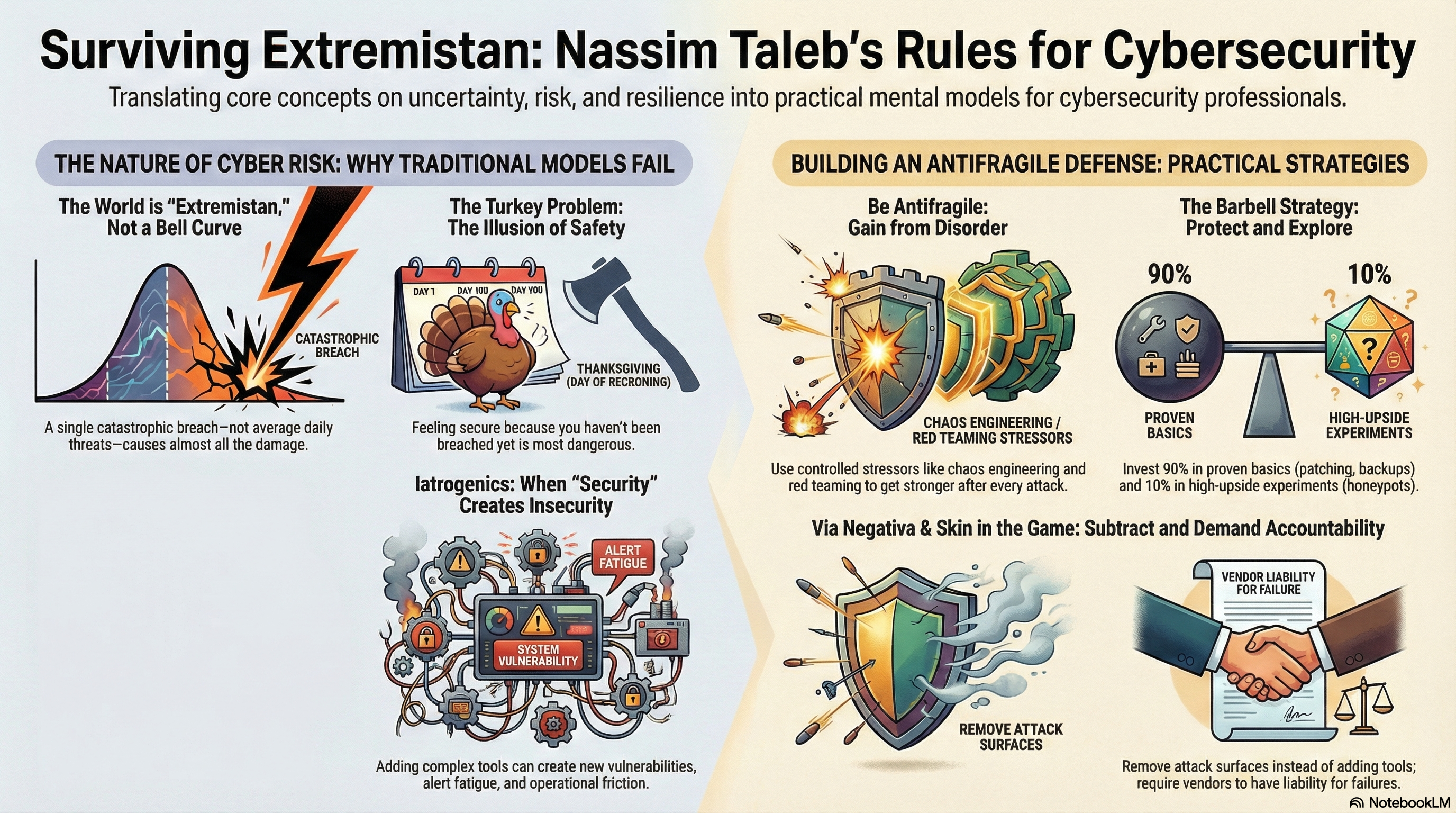

The answer is not to reject AI.

That would be strategically weak.

The answer is also not to let every department wire agents into business workflows with broad access, vague accountability, weak logging, and no structured review.

That would be reckless.

The rational path is selective adoption with governance first.

Organizations that get this right will be able to move faster because they can prove where agents exist, what authority they have, what controls apply, and how failures will be contained.

Organizations that get it wrong will eventually face the predictable consequences:

- unclear accountability

- invisible privilege paths

- poor evidence

- data exposure

- automation bias

- workflow drift

- legal ambiguity

- emergency cleanup in place of controls that should have been designed in from the beginning

This is not a theoretical problem. It is already showing up in real adoption patterns.

Download the Full E-Book

I have released a new e-book:

AI Agents Management Framework: Policy, Procedure, and Governance Controls for Managing AI Agents as Digital Workers

Inside, you will find:

- A governance-first model for selective AI adoption

- The six planes of agent control

- Identity, access, evidence, and oversight patterns

- Practical guidance for executives, CISOs, boards, HR, legal, engineering, and GRC teams

- Case narratives showing what we are seeing across large enterprises, mid-market firms, and small businesses

- Sample policies, procedures, risk tiering worksheets, Agent System Record templates, autonomy budget examples, incident response addenda, and offboarding guidance

The central idea is simple:

If you govern agents like applications, you are governing the wrong thing.

To download the full e-book, register here:

https://signup.microsolved.com/ai-management-e-book/

What You’ll Get When You Register

- A practical AI-agent governance blueprint

Download the full AI Agents Management Framework e-book and learn how to treat AI agents as managed digital workers, not ordinary applications. The framework helps leaders define ownership, authority, access, oversight, evidence, and shutdown procedures before agent workflows create unmanaged risk.

- Actionable controls you can adapt immediately

The e-book includes practical models for identity, policy, tool access, runtime controls, observability, governance, risk tiering, autonomy budgets, Agent System Records, performance reviews, incident response, and agent offboarding.

- Executive-ready guidance for safer AI adoption

Use the framework to help boards, executives, CISOs, HR, legal, engineering, and GRC teams align around a clear operating model for selective AI adoption, stronger accountability, and verifiable control.

About MicroSolved

MicroSolved, Inc. helps organizations improve security, governance, resilience, and operational trust in complex technology environments.

This e-book extends that work into AI-agent governance, with a focus on practical controls for identity, access, oversight, auditing, and enterprise operating model design.