A Breach That Didn’t Break In — It Logged In

The email looked routine.

A finance employee received a vendor payment request — well-written, contextually accurate, referencing an actual project. Nothing screamed “phish.” Attached was a short voice note from the CFO explaining the urgency.

The voice sounded right. The cadence, the phrasing — even the subtle impatience.

Moments later, a multi-factor authentication (MFA) prompt appeared. The employee approved it without thinking. They had approved dozens that week. Habit is powerful.

The breach didn’t bypass the firewall.

It didn’t exploit a zero-day vulnerability.

It didn’t even evade detection.

It bypassed identity confidence.

By the time the security team noticed anomalous financial transfers, the attacker had already authenticated, escalated privileges, and pivoted laterally — all using valid credentials.

In 2026, attackers aren’t breaking in.

They’re logging in.

And that reality demands a shift in how we think about security architecture. Zero Trust was a necessary evolution. But in many organizations, it’s stalled at the network layer. Meanwhile, identity has quietly become the primary control plane — and the primary attack surface.

If identity is where trust decisions happen, then identity is where risk must be engineered out.

Zero Trust Isn’t Enough Anymore

Zero Trust began as a powerful principle: “Never trust, always verify.” It challenged perimeter-centric thinking and encouraged segmentation, least privilege, and continuous validation.

But somewhere along the way, it became a marketing label.

Many implementations focus heavily on:

- Network micro-segmentation
- VPN replacement
- Device posture checks
- SASE rollouts

All valuable. None sufficient.

Because identity remains the weakest link.

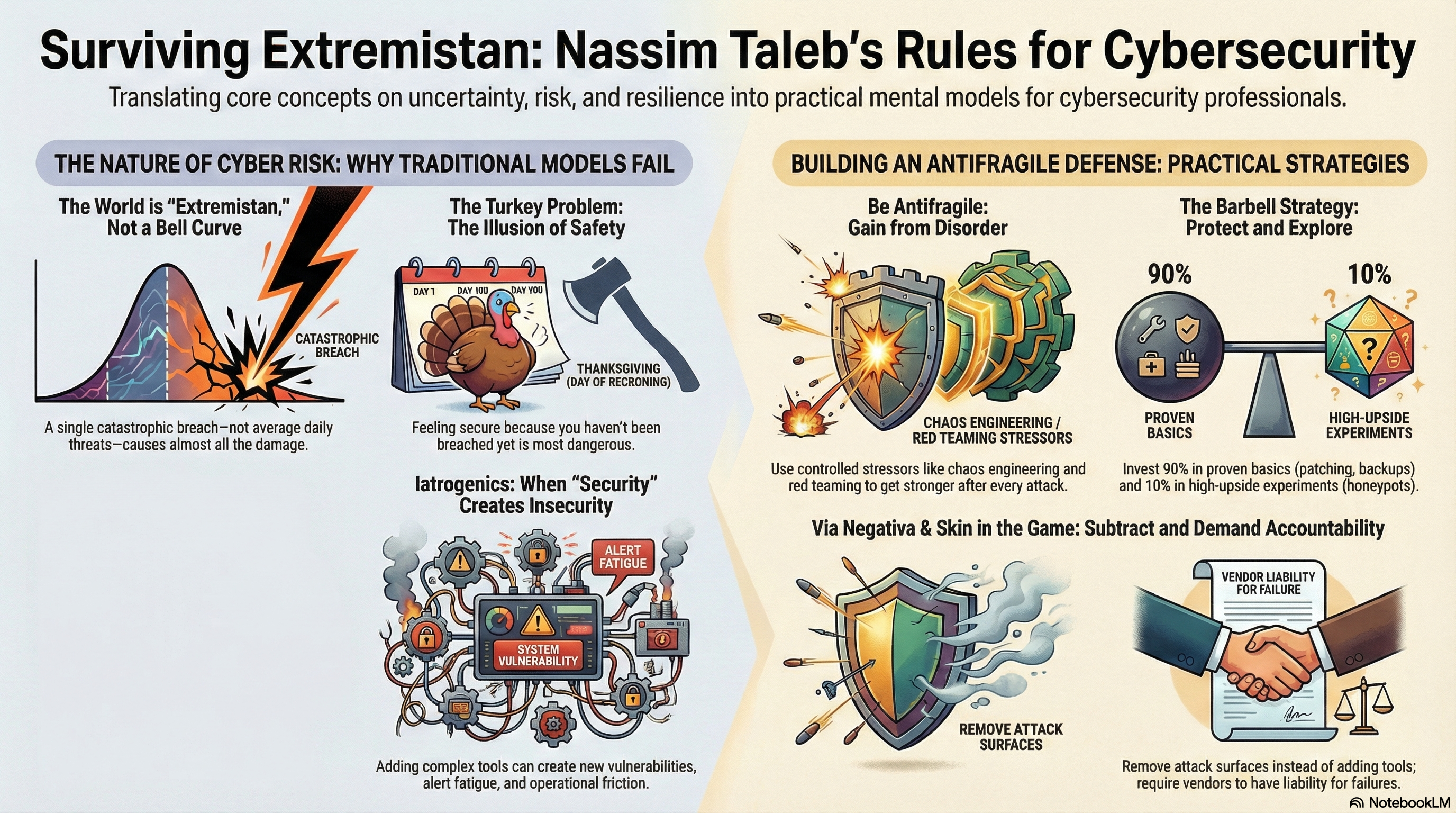

AI Has Changed the Identity Battlefield

Attackers now leverage AI to:

- Craft highly personalized spear phishing emails
- Generate convincing deepfake audio and video impersonations
- Launch MFA fatigue campaigns at scale
- Automate credential stuffing with adaptive logic

The tools available to adversaries have industrialized social engineering.

Push-based MFA, once considered strong protection, is now routinely abused through prompt bombing. Deepfake impersonation erodes human intuition. Credential reuse remains rampant.

Perimeter thinking has died.

Device-centric thinking is incomplete.

Identity is now the primary control plane.

If identity is the new perimeter, it must be treated like critical infrastructure — not a checkbox configuration in your IAM console.

The Identity-First Security Framework

An identity-first strategy doesn’t abandon Zero Trust. It operationalizes it — with identity at the center of risk reduction.

Below are five pillars that move identity from access management to risk engineering.

Pillar 1: Reduce the Identity Attack Surface

A Pareto-style rule of thumb applies:

Roughly 20% of identities create roughly 80% of the risk.

Privileged users. Service accounts. Automation tokens. Executive access. CI/CD credentials.

The first step isn’t detection. It’s reduction.

Actions

- Inventory all identities, human and machine
- Eliminate dormant accounts
- Reduce standing privileges
- Enforce just-in-time (JIT) access for elevated roles

Standing privilege is latent risk. Every persistent admin account is a pre-approved breach path.
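To make the JIT idea concrete, here is a minimal sketch of a time-boxed grant. The `JitGrant` class, the `active_roles` helper, and the one-hour TTL are all hypothetical names and values chosen for illustration; a real deployment would rely on the IAM or PAM platform's own elevation workflow.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class JitGrant:
    """A time-boxed elevation for one identity and one role (hypothetical model)."""
    identity: str
    role: str
    granted_at: datetime
    ttl: timedelta

    def is_active(self, now: datetime) -> bool:
        # The grant is valid only inside [granted_at, granted_at + ttl).
        return self.granted_at <= now < self.granted_at + self.ttl


def active_roles(grants: list[JitGrant], identity: str, now: datetime) -> set[str]:
    """Return the elevated roles an identity holds at `now`; expired grants drop out."""
    return {g.role for g in grants if g.identity == identity and g.is_active(now)}


start = datetime(2026, 1, 5, 9, 0, tzinfo=timezone.utc)
grants = [JitGrant("alice", "db-admin", start, timedelta(hours=1))]

print(active_roles(grants, "alice", start + timedelta(minutes=30)))  # {'db-admin'}
print(active_roles(grants, "alice", start + timedelta(hours=2)))     # set()
```

The design point is that privilege expires by default. Nobody has to remember to remove it, so there is no persistent admin path left behind.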

Metrics That Matter

- Percentage of privileged accounts
- Average privilege duration
- Dormant account count
- Privileged access review frequency

Organizations that aggressively reduce identity sprawl see measurable decreases in lateral movement potential.
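Two of these metrics can be computed from a plain identity inventory. The sketch below assumes a hypothetical `Identity` record and a 90-day dormancy threshold; both are illustrative choices, and the right threshold depends on your environment.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Identity:
    name: str
    privileged: bool
    last_used: Optional[datetime]  # None means the identity has never been used


def attack_surface_metrics(identities, now, dormant_after=timedelta(days=90)):
    """Compute privileged-account percentage and dormant-account count."""
    total = len(identities)
    privileged = sum(1 for i in identities if i.privileged)
    # Assumption: an identity is dormant if never used or unused for `dormant_after`.
    dormant = [
        i.name for i in identities
        if i.last_used is None or now - i.last_used > dormant_after
    ]
    return {
        "privileged_pct": round(100 * privileged / total, 1) if total else 0.0,
        "dormant_count": len(dormant),
        "dormant_names": sorted(dormant),
    }


now = datetime(2026, 3, 1, tzinfo=timezone.utc)
inventory = [
    Identity("alice", True, datetime(2026, 2, 27, tzinfo=timezone.utc)),
    Identity("svc-backup", True, datetime(2025, 9, 1, tzinfo=timezone.utc)),
    Identity("bob", False, datetime(2026, 2, 20, tzinfo=timezone.utc)),
    Identity("old-contractor", False, None),
]
metrics = attack_surface_metrics(inventory, now)
print(metrics)
# {'privileged_pct': 50.0, 'dormant_count': 2, 'dormant_names': ['old-contractor', 'svc-backup']}
```

Note that `svc-backup` is both privileged and dormant, which is the kind of overlap worth prioritizing first.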

Reducing exposure is step one.

Validating behavior is step two.

Pillar 2: Continuous Identity Verification — Not Just MFA

MFA is necessary. It is no longer sufficient.

Push-based MFA fatigue attacks are common. Static authentication events assume trust after login. Attackers exploit both.

We must shift from event-based authentication to session-based validation.

Move Beyond:

- Blind push approvals
- Static login checks
- Binary allow/deny thinking

Add:

- Risk-based authentication
- Device posture validation
- Behavioral biometrics
- Continuous session monitoring

Attackers use AI to simulate legitimacy.

Defenders must use AI to detect deviation.
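As a rough illustration of moving past binary allow/deny, the sketch below scores a session from a few signals and maps the score to three outcomes. The signal names, weights, and thresholds are assumptions made up for this example, not values from any product; a real system would tune them against observed data and re-evaluate the score throughout the session, not only at login.

```python
from dataclasses import dataclass


@dataclass
class SessionSignals:
    new_device: bool
    new_country: bool
    device_compliant: bool
    sensitive_resource: bool


# Illustrative weights only; these are assumptions, not recommended values.
WEIGHTS = {
    "new_device": 25,
    "new_country": 35,
    "non_compliant_device": 30,
    "sensitive_resource": 20,
}


def risk_score(signals: SessionSignals) -> int:
    """Sum the weights of the risky signals present, capped at 100."""
    score = 0
    if signals.new_device:
        score += WEIGHTS["new_device"]
    if signals.new_country:
        score += WEIGHTS["new_country"]
    if not signals.device_compliant:
        score += WEIGHTS["non_compliant_device"]
    if signals.sensitive_resource:
        score += WEIGHTS["sensitive_resource"]
    return min(score, 100)


def decide(score: int) -> str:
    """Map a score to allow, step-up verification, or deny (assumed thresholds)."""
    if score >= 70:
        return "deny"
    if score >= 40:
        return "step_up"
    return "allow"


routine = SessionSignals(False, False, True, True)
odd = SessionSignals(True, False, True, True)
hostile = SessionSignals(True, True, False, True)

print(decide(risk_score(routine)))  # allow
print(decide(risk_score(odd)))      # step_up
print(decide(risk_score(hostile)))  # deny
```

The middle outcome matters most: a step-up challenge lets a legitimate user continue while denying an attacker the benefit of a single approved prompt.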

Useful Metrics

- MFA approval anomaly rate
- Impossible travel detections
- Session risk score trends
- High-risk login percentage

Authentication should not be a moment. It should be a monitored process.
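Impossible travel is one of the simpler signals to sketch. The example below uses the haversine formula to estimate the distance between two login locations and flags the pair if the implied speed exceeds a plausible maximum. The 900 km/h ceiling and the 100 km minimum distance (to absorb geolocation noise) are assumptions for illustration.

```python
import math
from datetime import datetime, timezone

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0  # assumption: roughly airliner cruising speed
MIN_DISTANCE_KM = 100.0    # assumption: ignore small jumps caused by GeoIP noise


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(prev, curr):
    """prev and curr are (timestamp, latitude, longitude) tuples for two logins."""
    distance = haversine_km(prev[1], prev[2], curr[1], curr[2])
    if distance < MIN_DISTANCE_KM:
        return False
    hours = (curr[0] - prev[0]).total_seconds() / 3600
    if hours <= 0:
        return True  # far apart at the same instant
    return distance / hours > MAX_PLAUSIBLE_KMH


new_york = (datetime(2026, 1, 5, 9, 0, tzinfo=timezone.utc), 40.7128, -74.0060)
london_1h = (datetime(2026, 1, 5, 10, 0, tzinfo=timezone.utc), 51.5074, -0.1278)
london_8h = (datetime(2026, 1, 5, 17, 0, tzinfo=timezone.utc), 51.5074, -0.1278)

print(is_impossible_travel(new_york, london_1h))  # True
print(is_impossible_travel(new_york, london_8h))  # False
```

On its own this signal is noisy (VPNs and mobile carriers shift apparent location), so it works better as one input to a session risk score than as a standalone block rule.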

Pillar 3: Identity Telemetry & Behavioral Baselines

First-principles thinking:

What is compromise?

It is behavior deviation.

A legitimate user logging in from a new country at 3:00 a.m. and accessing sensitive financial systems may have valid credentials — but invalid behavior.

Implementation Steps

- Build per-role behavioral baselines
- Track privilege escalation attempts
- Integrate IAM logs into SOC workflows
- Correlate identity data with endpoint and cloud telemetry

Second-order thinking matters here.

More alerts without tuning lead to burnout.

Identity alerts must be high-confidence. Behavioral models must understand role context, not just user anomalies.

Security teams should focus on detecting intent signals — not just login events.
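A per-role baseline can start very simply. The sketch below builds login-country and login-hour counts per role from historical events, then reports how a new login deviates. The function names, the event shape `(role, country, hour)`, and the 5% rarity threshold are all hypothetical; production behavioral models are considerably richer.

```python
from collections import Counter, defaultdict


def build_baselines(history):
    """history: iterable of (role, country, hour_utc) tuples from past logins."""
    baselines = defaultdict(lambda: {"countries": Counter(), "hours": Counter(), "total": 0})
    for role, country, hour in history:
        b = baselines[role]
        b["countries"][country] += 1
        b["hours"][hour] += 1
        b["total"] += 1
    return baselines


def deviations(baselines, role, country, hour, rare_fraction=0.05):
    """Return the reasons a login deviates from its role's baseline (may be empty)."""
    b = baselines.get(role)
    if b is None or b["total"] == 0:
        return ["no_baseline_for_role"]
    reasons = []
    if b["countries"][country] == 0:
        reasons.append("new_country_for_role")
    # Assumption: an hour seen in under 5% of the role's logins counts as unusual.
    if b["hours"][hour] / b["total"] < rare_fraction:
        reasons.append("unusual_hour_for_role")
    return reasons


# 40 historical finance logins from "US" spread across 13:00-20:00 UTC.
history = [("finance", "US", h) for h in range(13, 21) for _ in range(5)]
baselines = build_baselines(history)

print(deviations(baselines, "finance", "US", 15))  # []
print(deviations(baselines, "finance", "XX", 3))
# ['new_country_for_role', 'unusual_hour_for_role']
```

Requiring more than one reason before alerting is one way to keep identity alerts high-confidence, in line with the tuning concern above.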

Pillar 4: Machine Identity Governance

Machine identities often outnumber human identities in cloud-native environments.

Consider:

- Service accounts
- API tokens
- Certificates
- CI/CD pipeline credentials
- Container workload identities

AI-powered attackers increasingly target automation keys. They know that compromising a service account can provide persistent, stealthy access.

Critical Actions

- Automatically rotate secrets
- Shorten token lifetimes
- Continuously scan repositories for hardcoded credentials
- Enforce workload identity controls
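Repository scanning for hardcoded credentials is usually done with a dedicated scanner, but the core idea fits in a few lines. The sketch below applies three illustrative regex rules to a block of text and reports the line number and rule name, never the secret itself. The rule set is an assumption for demonstration and is far from complete.

```python
import re

# Illustrative rules only; real scanners ship far larger, tuned rule sets.
RULES = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?:password|secret|api_key|token)\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}


def scan_text(text):
    """Return (line_number, rule_name) pairs for every rule that matches a line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


sample = '''\
region = "us-east-1"
aws_key = "AKIAIOSFODNN7EXAMPLE"
db_password = "correct-horse-battery"
timeout = 30
'''

print(scan_text(sample))
# [(2, 'aws_access_key_id'), (3, 'generic_assignment')]
```

Running a check like this in CI, before code merges, catches secrets earlier than a periodic sweep of the repository history.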

Key Metrics

- Average token lifespan
- Hardcoded secret discovery rate
- Machine identity inventory completeness
- Unused service account count
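The token lifespan metric can be sketched from nothing more than issue timestamps. The `token_age_report` function and the 30-day rotation limit below are hypothetical; the appropriate limit depends on the token type and what it can reach.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean


def token_age_report(tokens, now, max_age=timedelta(days=30)):
    """tokens: dict mapping token name to its issue time. Assumed 30-day limit."""
    ages = {name: now - issued for name, issued in tokens.items()}
    overdue = sorted(name for name, age in ages.items() if age > max_age)
    avg_days = mean(a.total_seconds() for a in ages.values()) / 86400 if ages else 0.0
    return {"average_age_days": round(avg_days, 1), "overdue_for_rotation": overdue}


now = datetime(2026, 3, 1, tzinfo=timezone.utc)
tokens = {
    "ci-deploy": datetime(2026, 2, 19, tzinfo=timezone.utc),    # 10 days old
    "metrics-push": datetime(2026, 2, 9, tzinfo=timezone.utc),  # 20 days old
    "backup-svc": datetime(2025, 12, 1, tzinfo=timezone.utc),   # 90 days old
}
report = token_age_report(tokens, now)
print(report)
# {'average_age_days': 40.0, 'overdue_for_rotation': ['backup-svc']}
```

The average hides the outlier here, which is why the overdue list is reported alongside it.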

Machine identities do not get tired. They also do not question unusual requests.

That makes them both powerful and dangerous.

Pillar 5: Identity Incident Response Playbooks

Identity compromise spreads faster than traditional breaches because authentication grants implicit trust.

Incident response must evolve accordingly.

Include in Playbooks:

- Immediate token invalidation
- Automated session termination
- Privilege rollback
- Identity forensics logging
- Rapid behavioral reassessment
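The playbook steps above can be sketched as one ordered containment routine. Everything here is an in-memory stand-in: `IdentityState`, `contain`, and the audit tuple format are hypothetical, and a real playbook would call the identity provider's revocation and session APIs in place of clearing sets.

```python
from dataclasses import dataclass, field


@dataclass
class IdentityState:
    """In-memory stand-in for what an identity provider tracks (hypothetical)."""
    tokens: set = field(default_factory=set)
    sessions: set = field(default_factory=set)
    roles: set = field(default_factory=set)
    baseline_roles: set = field(default_factory=set)


def contain(identity, state, audit_log):
    """Run containment steps in order, recording what was removed at each step."""
    # 1. Immediate token invalidation
    audit_log.append((identity, "tokens_invalidated", sorted(state.tokens)))
    state.tokens.clear()
    # 2. Automated session termination
    audit_log.append((identity, "sessions_terminated", sorted(state.sessions)))
    state.sessions.clear()
    # 3. Privilege rollback to the identity's baseline roles
    removed = state.roles - state.baseline_roles
    audit_log.append((identity, "privileges_rolled_back", sorted(removed)))
    state.roles &= state.baseline_roles
    # 4. Flag for behavioral reassessment (handled elsewhere in a real system)
    audit_log.append((identity, "flagged_for_reassessment", []))
    return audit_log


state = IdentityState(
    tokens={"t1", "t2"},
    sessions={"s1"},
    roles={"user", "finance-admin"},
    baseline_roles={"user"},
)
log = contain("jdoe", state, [])

print(state.roles)               # {'user'}
print([step for _, step, _ in log])
# ['tokens_invalidated', 'sessions_terminated', 'privileges_rolled_back', 'flagged_for_reassessment']
```

Logging each step before performing it keeps the forensic record intact even if a later step fails.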

Identity Maturity Model

| Level | Capability |
|---|---|
| Level 1 | MFA + Basic IAM |
| Level 2 | JIT Access + Risk-based authentication |
| Level 3 | Behavioral detection + Machine identity governance |
| Level 4 | Autonomous identity containment |

The future state is not manual triage.

It is autonomous identity containment.

Implementation Roadmap

Transformation does not require a multi-year overhaul. It requires disciplined sequencing.

First 30 Days

- Conduct a full identity inventory audit
- Launch a privilege reduction sprint
- Review MFA configurations and eliminate push-only dependencies
- Identify dormant and orphaned accounts

Immediate wins come from subtraction.

First 90 Days

- Deploy risk-based authentication policies
- Integrate identity telemetry into SOC workflows
- Begin machine identity governance initiatives
- Establish behavioral baselines for high-risk roles

Security operations and IAM teams must collaborate here.

Six-Month Horizon

- Implement behavioral AI modeling
- Automate session risk scoring
- Deploy automated identity containment workflows
- Establish executive reporting on identity risk metrics

Identity becomes measurable. Measurable becomes manageable.

Real-World Examples

Example 1: Privilege Reduction

One enterprise reduced privileged accounts by 42%. The result was a significant reduction in lateral movement pathways and faster containment during simulated breach exercises.

Example 2: MFA Fatigue Prevention

A financial services firm detected abnormal MFA approval timing patterns. Session anomaly detection flagged behavior inconsistent with historical norms. The attack was stopped before funds were transferred.

The lesson: behavior, not just credentials, determines legitimacy.
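One simple form of MFA timing analysis is counting push prompts per user in a sliding window. The sketch below is a generic illustration, not the firm's actual detection logic; the `PushPromptMonitor` class, the limit of five prompts, and the ten-minute window are all assumptions.

```python
from collections import deque
from datetime import datetime, timedelta, timezone


class PushPromptMonitor:
    """Flags a user when push prompts in a sliding window exceed a limit."""

    def __init__(self, max_prompts=5, window=timedelta(minutes=10)):
        self.max_prompts = max_prompts
        self.window = window
        self._prompts = {}  # user -> deque of prompt timestamps

    def record_prompt(self, user, at):
        """Record a prompt and return True if the user looks prompt-bombed."""
        q = self._prompts.setdefault(user, deque())
        q.append(at)
        # Drop prompts that have aged out of the window.
        while q and at - q[0] > self.window:
            q.popleft()
        return len(q) > self.max_prompts


monitor = PushPromptMonitor()
t0 = datetime(2026, 1, 5, 2, 0, tzinfo=timezone.utc)

# Six prompts, one minute apart: only the sixth crosses the limit.
flags = [monitor.record_prompt("jdoe", t0 + timedelta(minutes=i)) for i in range(6)]
print(flags)  # [False, False, False, False, False, True]

# A single prompt 30 minutes later falls in a fresh window.
print(monitor.record_prompt("jdoe", t0 + timedelta(minutes=35)))  # False
```

When the flag trips, a sensible response is to suppress further push prompts for that user and require a phishing-resistant factor, so that one tired approval cannot complete the login.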

Measurable Outcomes

| Identity Control | Risk Reduced | Measurement Method |
|---|---|---|
| JIT Privilege | Lateral movement | Privilege duration logs |
| Risk-based MFA | Phishing success | Approval anomaly rate |
| Token rotation | Credential abuse | Token age metrics |
| Behavioral baselines | Account takeover | Session deviation scores |
| Machine identity inventory | Automation abuse | Service account audits |

Security leaders must shift from tool counts to risk-reduction metrics.

Identity Is the New Control Plane

Attackers scale with AI.

They automate reconnaissance. They generate deepfake executives. They weaponize credentials at industrial scale.

Defenders must scale identity intelligence.

In 2026, the organizations that win will not be those with the most tools. They will be those who understand that identity is infrastructure.

Firewalls inspect traffic.

Endpoints enforce policy.

Identity determines authority.

And authority is what attackers want.

Zero Trust was the beginning. Identity-first security is the evolution.

The question is no longer whether your users are inside the perimeter.

The question is whether your identity architecture assumes breach — and contains it automatically.

Info & Help: Advancing Your Identity Strategy

Identity-first security is not a product deployment. It is an operational discipline.

If your organization is:

- Struggling with privilege sprawl
- Experiencing MFA fatigue attempts
- Concerned about AI-driven impersonation
- Lacking visibility into machine identities
- Unsure how to measure identity risk

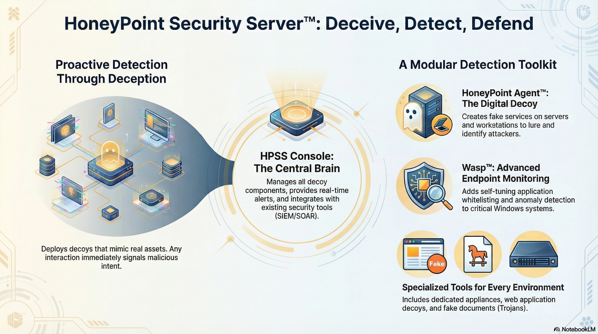

The team at MicroSolved, Inc. can help.

For over three decades, MicroSolved has assisted enterprises, financial institutions, healthcare providers, and critical infrastructure organizations in strengthening identity governance, incident response readiness, and security operations maturity.

Our services include:

- Identity risk assessments
- Privileged access reviews
- IAM architecture design
- SOC integration and telemetry tuning
- Incident response planning and tabletop exercises

If identity is your new control plane, it deserves engineering rigor.

Reach out to MicroSolved to discuss how to reduce measurable identity risk — not just deploy another control.

Security is no longer about keeping attackers out.

It’s about making sure that when they log in, they don’t get far.

* AI tools were used as a research assistant for this content, but human moderation and writing are also included. The included images are AI-generated.